Buy A Modem

Ask HN: What happens when humans become as dumb as AI?

The existential risk that has received much attention is machines eventually becoming as smart as people, and then smarter still. What I see in the news, and, anecdotally, around me is rather the opposite. Thinking is hard. People, even those who went through rigorous university training to develop their critical thinking, are increasingly outsourcing thinking to machines. SOTA models don't need to get any better to catch up with us, they just need to wait. And maybe not even that long. I w

Show HN: I embedded 685M public texts in 32 minutes (on 8x A100, Rust, TensorRT)

Quick note on how it works and how I built my batch embedding engine, IgniteMS. The whole thing runs as one Rust process: reading input, tokenizing, packing batches, and keeping the queue full. TensorRT handles inference. Python is only a wrapper. I built it this way because when you use more than a couple of GPUs, the GPUs stop being the problem: the CPU cannot feed them fast enough. One A100 can go through batches faster than Python can tokenize and feed, so the GPU just sits there idle wai
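The feeding pattern described in this post (tokenize, pack batches, keep a queue full for the inference stage) can be sketched roughly as follows. This is a minimal illustration only, not IgniteMS code: the whitespace tokenizer, the `max_tokens` budget, the `queue_depth` value, and all function names are assumptions made for the example, and the "inference" step is a stand-in that just counts tokens.

```python
import queue
import threading

SENTINEL = None  # marks the end of the input stream


def pack_batches(texts, max_tokens):
    """Greedily group tokenized texts so each batch stays within max_tokens."""
    batch, used = [], 0
    for text in texts:
        tokens = text.split()  # stand-in tokenizer (assumption)
        # Flush the current batch if adding this text would exceed the budget.
        if batch and used + len(tokens) > max_tokens:
            yield batch
            batch, used = [], 0
        batch.append(tokens)
        used += len(tokens)
    if batch:
        yield batch


def producer(texts, q, max_tokens):
    """CPU side: tokenize and pack, blocking whenever the queue is full."""
    for batch in pack_batches(texts, max_tokens):
        q.put(batch)
    q.put(SENTINEL)


def consumer(q, results):
    """Inference side: drain batches until the sentinel arrives."""
    while True:
        batch = q.get()
        if batch is SENTINEL:
            break
        # Stand-in for GPU inference: record the token count of each text.
        results.append([len(tokens) for tokens in batch])


def run_pipeline(texts, max_tokens=8, queue_depth=4):
    """Run producer and consumer concurrently over a bounded queue."""
    q = queue.Queue(maxsize=queue_depth)
    results = []
    worker = threading.Thread(target=producer, args=(texts, q, max_tokens))
    worker.start()
    consumer(q, results)
    worker.join()
    return results
```

The bounded queue is the key design choice in this sketch: the producer runs ahead of the consumer by at most `queue_depth` batches, so the consumer only waits when packing genuinely cannot keep up.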

My Humble AI Market Prediction

In 12-18 months we get local models that match the capabilities of 4.6. The overall capabilities will peak, and we all get highly efficient code forges in a box available in everyone's home. AI is a generator, an advanced compiler, not a place for runtime. It's best used as a powerful hammer aimed at one thing until the structure is 'built', then we put the hammer away and enjoy the spoils. These data centers are being built on the assumption that billions of people will

Show HN: Hitoku Draft – Context aware local assistant

Hi guys. I have been working on Hitoku Draft, an open-source, voice-first AI assistant that runs entirely locally. I posted about it already, and now it also has transcription with voice editing. Looking for feedback, as I found that outside tech circles people still do not use this tech much. It's context-aware, in the sense that it reads your screen, documents, and active app to understand what you're working on. You can ask about PDFs, reply to emails, create calendar events, us

Anthropic Urges Global Pause in AI Development, Flags 'Self-Improvement' Risk

WSJ

Anthropic is calling for top artificial intelligence labs to weigh slowing the pace of development, suggesting that AI systems are advancing so rapidly that they may soon be able to improve themselves without human intervention in ways that could pose significant societal risks. The ability to slow global AI development would “likely be a good thing,” the company said Thursday in a blog post that disclosed internal data documenting how quickly its most advanced models are improving. The post,

Show HN: Formally verified polygon intersection – Opus 4.8 oneshots, prev failed

To my knowledge, this is the first formally verified implementation of an intersection algorithm for polygons. The experience of working with AI agents on this project changed a lot with recent model releases, as I describe in the readme. Opus 4.8 is able to provide an algorithm implementation with a formal proof in one shot, whereas previous models required me to provide proof strategies in multiple steps. Trust in the correctness comes entirely from the Lean checker and human review of a small specif

Routers, Modems, and Why Knowing the Difference Is Crucial

Your modem and router are the dynamic duo you need to get online, so long as you don't mistake one for the other.

Troll Face Realism GIF

Show HN: Forge – Guardrails take an 8B model from 53% to 99% on agentic tasks

Hi HN, I'm Antoine Zambelli, AI Director at Texas Instruments. I built Forge, an open-source reliability layer for self-hosted LLM tool-calling.

What it does:
- Adds domain-and-tool-agnostic guardrails (retry nudges, step enforcement, error recovery, VRAM-aware context management) to local models running on consumer hardware
- Takes an 8B model from ~53% to ~99% on multi-step agentic workflows without changing the model, just the system around it
- Ships with an eval harness and interactive das
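One of the guardrails named in this post, the retry nudge, can be illustrated with a short sketch. This is not Forge's API: the function name, the JSON tool-call shape (`{"tool": ..., "args": ...}`), the nudge wording, and the `model` callable are all hypothetical, chosen only to show the idea of validating a model's output and re-prompting with a corrective message instead of changing the model.

```python
import json


def call_with_retry_nudges(model, prompt, allowed_tools, max_retries=3):
    """Ask `model` for a JSON tool call, nudging it again after invalid replies.

    `model` is any callable that takes the message list and returns a string
    (hypothetical interface). Returns the parsed call and the retry count.
    """
    messages = [prompt]
    for attempt in range(max_retries + 1):
        raw = model(messages)
        try:
            call = json.loads(raw)
        except json.JSONDecodeError:
            # Nudge: the reply was not parseable at all.
            messages.append("Your last reply was not valid JSON. "
                            "Reply with a JSON tool call only.")
            continue
        if not isinstance(call, dict) or call.get("tool") not in allowed_tools:
            # Nudge: parseable, but not a call to a known tool.
            messages.append(f"Unknown tool. Choose one of: {sorted(allowed_tools)}.")
            continue
        return call, attempt
    raise RuntimeError("model did not produce a valid tool call")
```

A usage example with a scripted stand-in model that fails twice before producing a valid call:

```python
replies = iter(["not json", '{"tool": "rm"}', '{"tool": "search", "args": {"q": "x"}}'])
call, retries = call_with_retry_nudges(lambda msgs: next(replies), "find x", {"search"})
```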

Show HN: Dari-docs – Optimize your docs using parallel coding agents

It’s well known at this point that documentation needs to be optimized for AI agents - we’re all pointing our Claude Code / Codex / Pi agents at documentation, and expecting the models to figure out how to implement a product. This, however, changes the entire optimization problem when writing documentation. Good documentation now becomes more objective - you are solving the very concrete problem: can a dumb harness running the dumbest model implement this reliably? Humans can typically

"Subligence" – proposed coinage for LLM "intelligence"

Call me a snowflake, but I propose that those of us who don't believe that AI is actually intelligence look for a term to refer to its "thinking" that isn't "intelligence", because this helps our weak minds keep things straight.

subligence /sʌbˈlɪdʒəns/ n.

A lesser or rudimentary form of intelligence; the capacity to respond, select, or adapt without full understanding.

The semblance of mind in animals, machines, systems, or inanimate things.

Discernment ben

Show HN: Interactive first-principles climate physics simulation with explainer

A 3D visualizer of earth's climate in the browser. Introduces physics step by step so you can watch each process unfold as a piece of the overall climate.

I built this over 6 months, almost entirely with AI, mostly Opus 4.6 in Claude Code. SF weather made no sense to me (Barely any seasons? September is the warmest month?) and I wanted to understand it better myself. This is a polished version of the app I'd want for myself, adding physics layer by layer to isolate the impact of each p

Instant YouTube channel analysis using public metrics

Hey HN! I’ve been spending a lot of time around creator communities recently, and one thing I kept noticing was how difficult it is for smaller YouTubers to understand their channel growth clearly. Most analytics tools are either overloaded with dashboards, require full account access, or are built mainly for large creators and agencies. So I built AAFY, a simple creator growth companion focused on clarity, privacy, and quick insights. The idea is straightforward:

you enter any public YouTube hand

ASK HN: AI was always a probability problem?

AI was always a probability problem. If we look at the emergence of sequence models (or statistical learning in a broader sense), they predict the next sequence based on the accumulation of knowledge that humankind has acquired over the years. The scientists who were tackling this problem (creating general AI) before thought of approaching it by creating different simulations for all kinds of problems, which would have led to infinity anyway; that is why it never worked. The solution was simple.

Show HN: ThinkLLM, A knowledge graph of AI models (HTTPS://thinkllm.dev)

As an Enterprise Architect I work with Capabilities, Use Cases and Value Maps, amongst other things. Hugging Face is a great resource for tracking down AI models but is mostly technical and quite detailed. I built ThinkLLM because I thought that, as more and more people are going to be using LLMs, it would be easier to find AI models by capabilities and use cases than by simply browsing long lists of models. The website has nothing extraordinary or special. It is just a different view on existing data. It

Show HN: I built a powerful RAG and knowledge graph agent that runs locally

Claw-Coder is an AI agent that runs locally on your laptop and has access to powerful tools. Instead of configuring Claude or Codex to use a local model, just use Claw-Coder.

Why was Claw-Coder created?

Answer: To solve the problem of privacy and security. When you use an agent that is configured with a cloud model like Codex, Cursor, Claude, etc., you are not just getting the agent; you are also giving up your codebase to train an LLM, which is a bit concerning and reduces trust in the technology.

Show HN: I built a RAG and knowledge graph agent that runs locally

Claw-Coder is an AI agent that runs locally on your laptop and has access to powerful tools. Instead of configuring Claude or Codex to use a local model, just use Claw-Coder.

Why was Claw-Coder created? Answer: To solve the problem of privacy and security. When you use an agent that is configured with a cloud model like Codex, Cursor, Claude, etc., you are not just getting the agent; you are also giving up your codebase to train an LLM, which is a bit concerning and reduces trust in the technology.

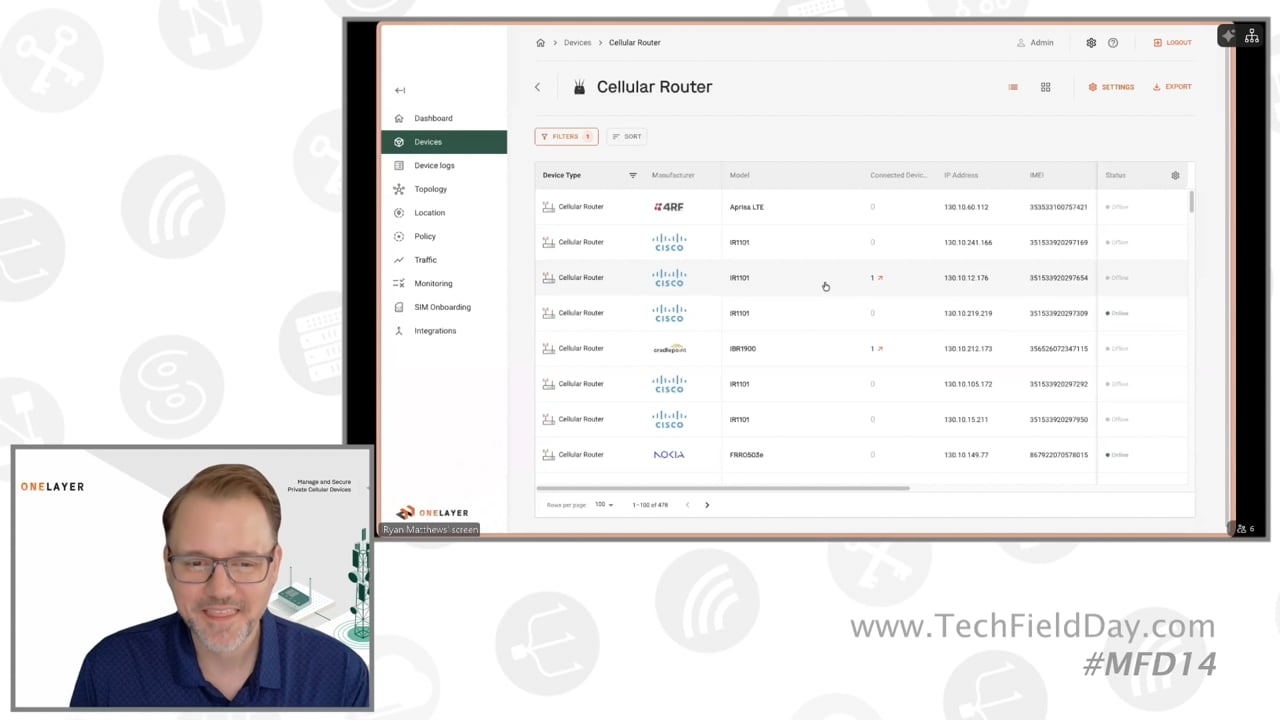

OneLayer Bridge Live Demo

The OneLayer Bridge live demo illustrates the transition from the garbled view of a traditional cellular core--filled with 15-digit IMSI and IMEI identifiers--to a device-aware management platform. Without OneLayer, administrators are forced to manage critical infrastructure through abstract numbers, making the activation or deactivation of SIM cards a high-risk manual task. OneLayer replaces this with a zoomed-out, user-friendly interface that identifies devices by their actual function and mod

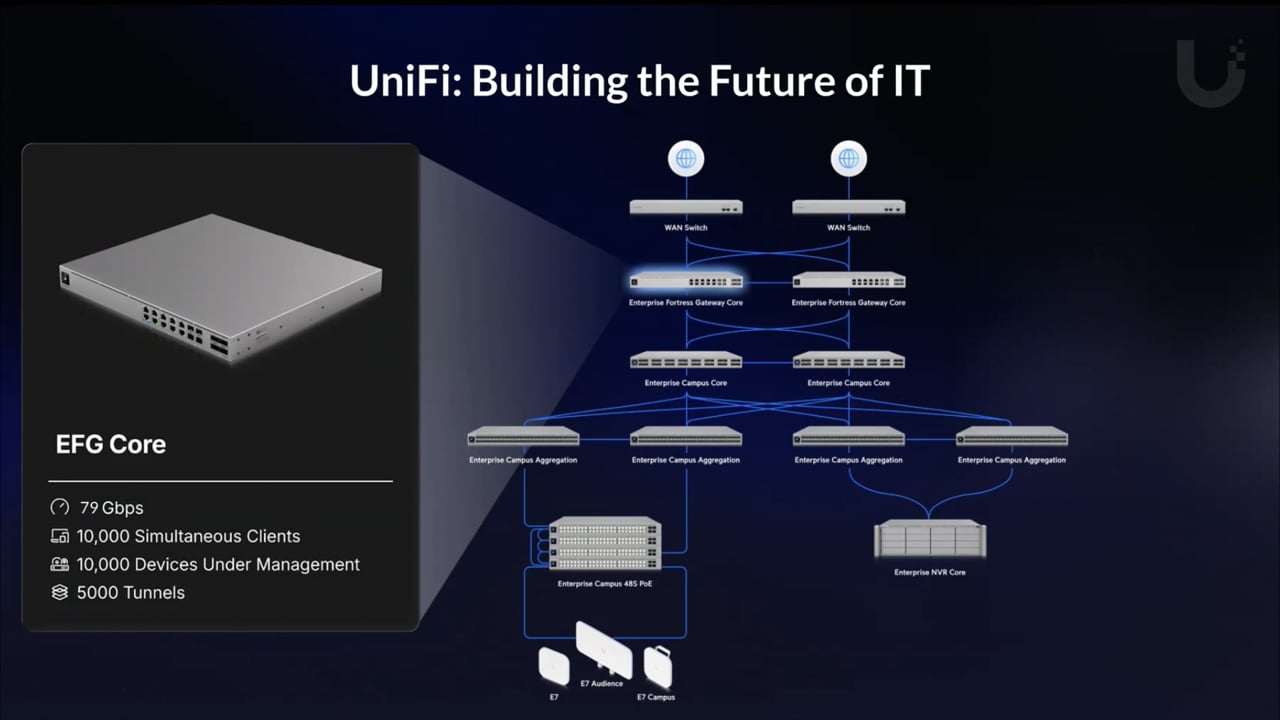

Ubiquiti Reliable Networking at Scale

Thomas Hildebrand detailed Ubiquiti's hardware strategies and product updates designed to support deployments ranging from large multi-tenant installations to massive single-site environments. The presentation began by highlighting a new, small-scale access point utilizing a unique RF profile, featuring a built-in 90-degree directional antenna with a 10 dB gain on the 5 GHz band, optimized to provide long-distance wireless uplinks in low-density deployments like parks. Hildebrand then addressed

Have you tried turning it off and on again? Telstra’s AI already did

Telstra has built an AI-powered system that, over the past few years, has been turning customers’ modems off and on again – saving a million help desk calls.